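To be clear up front: "weight sharing" here does not mean the weight sharing built into how CNNs work. It means making the branches of a multi-branch network such as a Siamese Network share weights in keras when those branches have identical structure.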

Functional API

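To achieve this, the keras Functional API is recommended. Sequential models can also be used, but this post uses the Functional API throughout.

Official documentation on multi-branch weight sharing in keras

The line above points to the official documentation, and this post implements the feature by following it. (As an aside: keras does have Chinese documentation, but it is no longer updated and some functions are incompletely documented, so reading the official English documentation directly is recommended.)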

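Model without shared parameters

The MatchNet network structure is used as the example; to keep the diagrams readable, the number of convolution modules is reduced to 2. The model without shared parameters is shown first so that the complete network structure is visible.

The overall network structure is shown below:

The code has two parts. The first part defines two functions: FeatureNetwork() builds the feature-extraction network, and ClassiFilerNet() builds the decision network (also called the metric network). The network visualizations are at the end of the post. Inside ClassiFilerNet(), FeatureNetwork() is called twice, so keras.models.Model is also instantiated twice. The resulting input1 and input2 are therefore two completely independent model branches, and their parameters are not shared.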

from keras.layers import Input, Conv2D, MaxPool2D, Activation, Dense, concatenate, Flatten
from keras.models import Model
from keras.utils import plot_model

# --------------------- Function definitions ---------------------

def FeatureNetwork():
    """Build the feature-extraction network.

    The structure is adapted for MNIST data; the commented-out layer below
    comes from the feature network of the original MatchNet.
    """
    inp = Input(shape=(28, 28, 1), name='FeatureNet_ImageInput')
    models = Conv2D(filters=24, kernel_size=(3, 3), strides=1, padding='same')(inp)
    models = Activation('relu')(models)
    models = MaxPool2D(pool_size=(3, 3))(models)

    models = Conv2D(filters=64, kernel_size=(3, 3), strides=1, padding='same')(models)
    # models = MaxPool2D(pool_size=(3, 3), strides=(2, 2))(models)
    models = Activation('relu')(models)

    models = Conv2D(filters=96, kernel_size=(3, 3), strides=1, padding='valid')(models)
    models = Activation('relu')(models)

    models = Conv2D(filters=96, kernel_size=(3, 3), strides=1, padding='valid')(models)
    models = Activation('relu')(models)

    models = Flatten()(models)
    models = Dense(512)(models)
    models = Activation('relu')(models)
    model = Model(inputs=inp, outputs=models)
    return model

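def ClassiFilerNet():  # add classifier net
    """Build the metric network and decision network.

    MatchNet actually consists of two parts: the feature-extraction layers
    (the Siamese branches) and the metric layer plus matching layer
    (together called the decision layers).
    """
    input1 = FeatureNetwork()  # one feature-extraction branch of the Siamese network
    input2 = FeatureNetwork()  # the other feature-extraction branch
    for layer in input2.layers:  # this loop is required; otherwise duplicate layer names raise an error
        layer.name = layer.name + str("_2")
    inp1 = input1.input
    inp2 = input2.input
    # Merge the branches by concatenating along the last axis (512 + 512 = 1024 features); this is not a sum.
    merge_layers = concatenate([input1.output, input2.output])
    fc1 = Dense(1024, activation='relu')(merge_layers)
    fc2 = Dense(1024, activation='relu')(fc1)
    fc3 = Dense(2, activation='softmax')(fc2)

    class_models = Model(inputs=[inp1, inp2], outputs=[fc3])
    return class_models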

# --------------------- Main ---------------------

matchnet = ClassiFilerNet()
matchnet.summary()  # print the network structure
plot_model(matchnet, to_file='G:/csdn攻略/picture/model.png')  # save the network structure as a PNG image
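
The merge step in ClassiFilerNet() uses concatenate, which joins the two feature vectors side by side instead of adding them. The following NumPy sketch only illustrates the shape difference; the arrays feat1 and feat2 are made-up stand-ins for the two 512-dimensional branch outputs, and the batch size of 2 is arbitrary.

```python
import numpy as np

# Hypothetical stand-ins for the two branch outputs: batch of 2, 512 features each.
feat1 = np.ones((2, 512), dtype="float32")
feat2 = np.full((2, 512), 2.0, dtype="float32")

# Concatenation keeps both feature vectors and doubles the feature axis.
merged = np.concatenate([feat1, feat2], axis=-1)

# Element-wise addition would keep the feature axis at 512 instead.
summed = feat1 + feat2

print(merged.shape)  # (2, 1024)
print(summed.shape)  # (2, 512)
```

This is why the first Dense layer of the decision network receives 1024 input features.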

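Model with shared parameters

FeatureNetwork() does the same job as above. For convenience, ClassiFilerNet() now takes a switch that selects whether to build the shared-parameter model: with reuse=True, the shared-parameter model is built.

The key point is that Model is instantiated only once for the feature extractor, so only one model is created. Two inputs are fed in, but both pass through that same model, and therefore the weights are shared.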
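
The idea can be seen in miniature with a much smaller network. The sketch below is not the MatchNet code: the one-layer encoder, the input size of 4, and the name branches are assumptions chosen only to keep the example short. It calls a single Model instance on two inputs and checks that identical inputs produce identical branch features, which is what shared weights imply.

```python
import numpy as np
from keras.layers import Input, Dense
from keras.models import Model

# Hypothetical tiny encoder standing in for the feature-extraction network.
enc_in = Input(shape=(4,))
encoder = Model(inputs=enc_in, outputs=Dense(8, activation='relu')(enc_in))

# Two separate inputs, one encoder instance: both branches reuse its weights.
inp1 = Input(shape=(4,))
inp2 = Input(shape=(4,))
feat1 = encoder(inp1)
feat2 = encoder(inp2)

# Expose the two branch outputs so they can be compared directly.
branches = Model(inputs=[inp1, inp2], outputs=[feat1, feat2])

# Feeding the same batch to both inputs must give the same features.
x = np.random.rand(3, 4).astype("float32")
out1, out2 = branches.predict([x, x], verbose=0)
same_features = bool(np.allclose(out1, out2))
print(same_features)
```

With two independently created encoders the same check would normally fail, because each encoder gets its own random initialization.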

from keras.layers import Input, Conv2D, MaxPool2D, Activation, Dense, concatenate, Flatten
from keras.models import Model
from keras.utils import plot_model

# --------------------- Function definitions ---------------------

def FeatureNetwork():
    """Build the feature-extraction network.

    The structure is adapted for MNIST data; the commented-out layers below
    come from the feature network of the original MatchNet.
    """
    inp = Input(shape=(28, 28, 1), name='FeatureNet_ImageInput')
    models = Conv2D(filters=24, kernel_size=(3, 3), strides=1, padding='same')(inp)
    models = Activation('relu')(models)
    models = MaxPool2D(pool_size=(3, 3))(models)

    models = Conv2D(filters=64, kernel_size=(3, 3), strides=1, padding='same')(models)
    # models = MaxPool2D(pool_size=(3, 3), strides=(2, 2))(models)
    models = Activation('relu')(models)

    models = Conv2D(filters=96, kernel_size=(3, 3), strides=1, padding='valid')(models)
    models = Activation('relu')(models)

    models = Conv2D(filters=96, kernel_size=(3, 3), strides=1, padding='valid')(models)
    models = Activation('relu')(models)

    # models = Conv2D(64, kernel_size=(3, 3), strides=2, padding='valid')(models)
    # models = Activation('relu')(models)
    # models = MaxPool2D(pool_size=(3, 3), strides=(2, 2))(models)

    models = Flatten()(models)
    models = Dense(512)(models)
    models = Activation('relu')(models)
    model = Model(inputs=inp, outputs=models)
    return model

def ClassiFilerNet(reuse=False):  # add classifier net
    """Build the metric network and decision network.

    MatchNet actually consists of two parts: the feature-extraction layers
    (the Siamese branches) and the metric layer plus matching layer
    (together called the decision layers).
    """
    if reuse:
        # Shared weights: build the feature extractor once and call it on both inputs.
        inp = Input(shape=(28, 28, 1), name='FeatureNet_ImageInput')
        models = Conv2D(filters=24, kernel_size=(3, 3), strides=1, padding='same')(inp)
        models = Activation('relu')(models)
        models = MaxPool2D(pool_size=(3, 3))(models)

        models = Conv2D(filters=64, kernel_size=(3, 3), strides=1, padding='same')(models)
        # models = MaxPool2D(pool_size=(3, 3), strides=(2, 2))(models)
        models = Activation('relu')(models)

        models = Conv2D(filters=96, kernel_size=(3, 3), strides=1, padding='valid')(models)
        models = Activation('relu')(models)

        models = Conv2D(filters=96, kernel_size=(3, 3), strides=1, padding='valid')(models)
        models = Activation('relu')(models)

        # models = Conv2D(64, kernel_size=(3, 3), strides=2, padding='valid')(models)
        # models = Activation('relu')(models)
        # models = MaxPool2D(pool_size=(3, 3), strides=(2, 2))(models)
        models = Flatten()(models)
        models = Dense(512)(models)
        models = Activation('relu')(models)
        model = Model(inputs=inp, outputs=models)

        inp1 = Input(shape=(28, 28, 1))  # first input
        inp2 = Input(shape=(28, 28, 1))  # second input

        model_1 = model(inp1)  # one feature-extraction branch of the Siamese network
        model_2 = model(inp2)  # the other branch, produced by the same model instance
        # Merge by concatenating along the last axis (512 + 512 = 1024 features); this is not a sum.
        merge_layers = concatenate([model_1, model_2])
    else:
        # Independent weights: build the feature extractor twice.
        input1 = FeatureNetwork()  # one feature-extraction branch of the Siamese network
        input2 = FeatureNetwork()  # the other feature-extraction branch
        for layer in input2.layers:  # this loop is required; otherwise duplicate layer names raise an error
            layer.name = layer.name + str("_2")
        inp1 = input1.input
        inp2 = input2.input
        # Merge by concatenating along the last axis (512 + 512 = 1024 features); this is not a sum.
        merge_layers = concatenate([input1.output, input2.output])

    fc1 = Dense(1024, activation='relu')(merge_layers)
    fc2 = Dense(1024, activation='relu')(fc1)
    fc3 = Dense(2, activation='softmax')(fc2)

    class_models = Model(inputs=[inp1, inp2], outputs=[fc3])
    return class_models

How can you tell whether the weights are really shared? Compare the parameter counts of the feature-extraction part directly!
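
The same comparison can be done in code with count_params(). The sketch below uses deliberately tiny networks so the numbers are easy to check by hand; the helper names tiny_encoder and build, and the layer sizes, are assumptions made for illustration and are not part of the MatchNet code.

```python
from keras.layers import Input, Dense, concatenate
from keras.models import Model

def tiny_encoder():
    """Hypothetical one-layer feature extractor: 4 inputs -> 8 features."""
    inp = Input(shape=(4,))
    return Model(inputs=inp, outputs=Dense(8)(inp))

def build(shared):
    """Build a two-branch model whose branches either share one encoder or not."""
    inp1, inp2 = Input(shape=(4,)), Input(shape=(4,))
    enc1 = tiny_encoder()
    enc2 = enc1 if shared else tiny_encoder()
    merged = concatenate([enc1(inp1), enc2(inp2)])
    out = Dense(2, activation='softmax')(merged)
    return Model(inputs=[inp1, inp2], outputs=out)

shared_count = build(shared=True).count_params()
unshared_count = build(shared=False).count_params()
encoder_count = tiny_encoder().count_params()  # 4 * 8 weights + 8 biases

# The unshared model carries exactly one extra copy of the encoder.
print(unshared_count - shared_count == encoder_count)
```

If the two branches were accidentally built from separate encoders, the "shared" total would match the unshared one, which makes this a quick sanity check.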

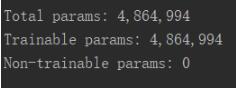

Parameter count of the model without shared parameters:

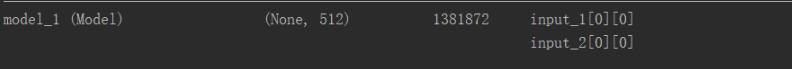

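Total parameter count of the shared-parameter model:

Parameter count of the feature-extraction part in the shared-parameter model:

Because of screenshot size limits, the feature-extraction parameter count of the non-shared model is not shown. By calculation, the feature-extraction part of the non-shared model has twice as many parameters as that of the shared model. The difference between the two models' total parameter counts is exactly the number of feature-extraction parameters in the shared model.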
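
The calculation can be reproduced by hand from the layer definitions in the listing. The sketch below assumes the Keras defaults (bias terms enabled, MaxPool2D stride equal to its pool size), under which the spatial size goes 28 -> 9 after pooling and then 7 and 5 after the two 'valid' convolutions. The helper names conv2d_params and dense_params are introduced here for illustration only.

```python
def conv2d_params(kernel, in_channels, filters):
    """Weights plus biases of a square-kernel Conv2D layer."""
    return kernel * kernel * in_channels * filters + filters

def dense_params(in_units, units):
    """Weights plus biases of a Dense layer."""
    return in_units * units + units

# Feature-extraction network: four 3x3 convolutions, then Dense(512) on 5*5*96 features.
feature_params = (
    conv2d_params(3, 1, 24)
    + conv2d_params(3, 24, 64)
    + conv2d_params(3, 64, 96)
    + conv2d_params(3, 96, 96)
    + dense_params(5 * 5 * 96, 512)
)

# Decision network: 1024 concatenated features -> Dense(1024) -> Dense(1024) -> Dense(2).
head_params = dense_params(1024, 1024) + dense_params(1024, 1024) + dense_params(1024, 2)

shared_total = feature_params + head_params        # one copy of the feature network
unshared_total = 2 * feature_params + head_params  # two independent copies

print(feature_params)   # 1381872
print(shared_total)     # 3483122
print(unshared_total)   # 4864994
```

The difference between the two totals equals feature_params, matching the statement above.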

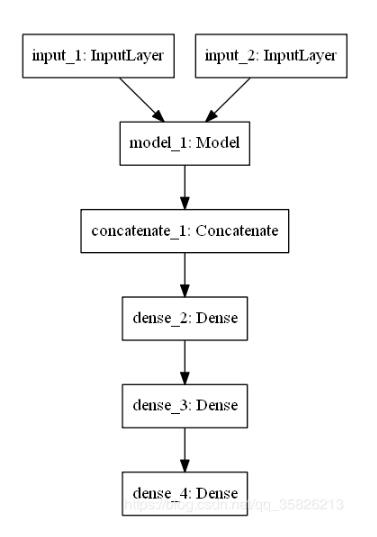

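Network structure visualization

Network structure without shared weights

Network structure with shared parameters, where model_1 represents the feature-extraction part.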


- Permanent link to this article: https://zxbcw.cn/post/188481/

- Reposting: the original link must be cited and kept in the body text
